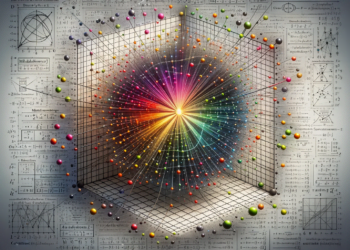

Dimensionality reduction is a core process in machine learning and artificial intelligence that reduces the number of variables (features) under consideration to a smaller, more manageable set. It becomes indispensable under the curse of dimensionality: the volume of the feature space grows exponentially with each additional dimension, so a fixed amount of data becomes increasingly sparse, which can lead to overfitting, long training times, and high computational cost. Recent research has focused on more efficient and robust methods for dimensionality reduction, allowing data scientists and AI engineers to work with high-dimensional data sets more effectively.
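One symptom of the curse of dimensionality is that distances concentrate: in high dimensions, the nearest and farthest neighbors of a point end up almost equally far away. The following is a minimal sketch of that effect, assuming uniformly distributed synthetic points; the function name `distance_contrast` and the sample sizes are illustrative choices, not part of any library.

```python
import numpy as np

def distance_contrast(n_points: int, n_dims: int, seed: int = 0) -> float:
    """Relative gap between the farthest and nearest neighbor of one query point."""
    rng = np.random.default_rng(seed)
    points = rng.uniform(size=(n_points, n_dims))  # synthetic data in the unit cube
    query = rng.uniform(size=n_dims)
    dists = np.linalg.norm(points - query, axis=1)
    return (dists.max() - dists.min()) / dists.min()

# The contrast shrinks as the number of dimensions grows.
for d in (2, 10, 100, 1000):
    print(d, round(distance_contrast(500, d), 3))
```

When the contrast approaches zero, distance-based methods such as nearest-neighbor search lose much of their discriminating power, which is one motivation for reducing dimensionality first.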

Traditional Methods of Dimensionality Reduction

Among the traditional methods for dimensionality reduction, principal component analysis (PCA) and factor analysis stand out. PCA is a linear feature-extraction technique that rotates the data into a new orthogonal coordinate system whose axes, the principal components, are ordered by the amount of variance they capture. Keeping only the first few components reduces the number of variables while retaining as much of the original variance as any linear projection of that size can.
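As an illustration, PCA can be sketched with a singular value decomposition of the centered data matrix. The helper `pca` and the synthetic data below are assumptions made for this example; production code would more likely use an existing library implementation.

```python
import numpy as np

def pca(X: np.ndarray, n_components: int):
    """Project X (samples x features) onto its top principal components."""
    X_centered = X - X.mean(axis=0)
    # Rows of Vt are the principal directions, ordered by decreasing variance.
    _, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    explained_variance = S**2 / (X.shape[0] - 1)
    ratio = explained_variance / explained_variance.sum()
    scores = X_centered @ Vt[:n_components].T
    return scores, ratio[:n_components]

rng = np.random.default_rng(0)
# Hypothetical data: 200 samples in 5 dimensions that vary mostly along 2 latent directions.
latent = rng.normal(size=(200, 2))
mixing = rng.normal(size=(2, 5))
X = latent @ mixing + 0.05 * rng.normal(size=(200, 5))

scores, ratio = pca(X, n_components=2)
print(scores.shape)   # reduced representation
print(ratio.sum())    # fraction of total variance kept by the two components
```

Because the synthetic data are essentially two-dimensional plus a little noise, two components retain nearly all of the variance; on real data the explained-variance ratio is a common guide for choosing how many components to keep.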

Factor analysis, by contrast, models the correlations among observed variables in terms of a smaller number of unobserved variables called factors. Although powerful, both methods face limitations when dealing with complex or highly nonlinear data.
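A minimal sketch of factor analysis, assuming scikit-learn is available and using synthetic data generated from two hidden factors (the data, sizes, and noise levels are invented for illustration):

```python
import numpy as np
from sklearn.decomposition import FactorAnalysis

rng = np.random.default_rng(0)
# Hypothetical data: 6 observed variables driven by 2 hidden factors,
# each observed variable with its own noise level.
factors = rng.normal(size=(500, 2))
loadings = rng.normal(size=(2, 6))
noise = rng.normal(size=(500, 6)) * np.array([0.1, 0.2, 0.3, 0.4, 0.5, 0.6])
X = factors @ loadings + noise

fa = FactorAnalysis(n_components=2, random_state=0)
Z = fa.fit_transform(X)           # estimated factor scores per sample

print(Z.shape)
print(fa.components_.shape)       # estimated loadings (factors x variables)
print(fa.noise_variance_.shape)   # one noise variance per observed variable
```

Unlike PCA, the model estimates a separate noise variance for each observed variable, which is what allows it to separate shared variation from variable-specific noise.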

Advances in Nonlinear Dimensionality Reduction

Nonlinear techniques have been developed to address the deficiencies of linear approaches. t-distributed Stochastic Neighbor Embedding (t-SNE) and Uniform Manifold Approximation and Projection (UMAP) are popular methods that have proven effective for visualizing large, high-dimensional data sets in a lower-dimensional space, typically two or three dimensions. t-SNE in particular has been widely used in computational biology, where the interpretation and visualization of data clusters are critical.
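A short sketch of a t-SNE embedding, assuming scikit-learn is installed. The bundled digits data set, the 300-sample subset, and the parameter values are illustrative choices, not recommendations.

```python
import numpy as np
from sklearn.datasets import load_digits
from sklearn.manifold import TSNE

# 8x8 digit images flattened to 64 features; a small subset keeps the run short.
X, y = load_digits(return_X_y=True)
X, y = X[:300], y[:300]

# Perplexity roughly sets the effective number of neighbors each point considers.
tsne = TSNE(n_components=2, perplexity=30.0, init="pca", random_state=0)
embedding = tsne.fit_transform(X)

print(embedding.shape)
```

The two columns of `embedding` can be plotted as a scatter plot colored by `y`. Cluster sizes and the distances between clusters in a t-SNE plot should be read with caution, since the method mainly preserves local neighborhoods.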

UMAP has emerged as a promising technique because it balances the preservation of local and global structure with relatively efficient computation. It assumes that the data lie on a low-dimensional manifold, approximates that manifold with a weighted neighbor graph (formally, a fuzzy simplicial set), and then optimizes a low-dimensional layout whose graph is as similar as possible to the original. This gives it a more explicit mathematical grounding than many other nonlinear methods.
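To make the graph-construction idea concrete, here is a simplified NumPy sketch of the first stage of UMAP: building a symmetric fuzzy nearest-neighbor graph. It is an illustration under simplifying assumptions (dense distances, Euclidean metric, no layout optimization), not the reference implementation; the function name `fuzzy_knn_graph` and its parameters are hypothetical.

```python
import numpy as np

def fuzzy_knn_graph(X: np.ndarray, n_neighbors: int = 5, n_iter: int = 64) -> np.ndarray:
    """Simplified sketch of a UMAP-style symmetric fuzzy k-NN graph."""
    n = X.shape[0]
    # Dense pairwise Euclidean distances (acceptable only for small examples).
    dists = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)
    np.fill_diagonal(dists, np.inf)  # a point is not its own neighbor
    knn_idx = np.argsort(dists, axis=1)[:, :n_neighbors]
    knn_dist = np.take_along_axis(dists, knn_idx, axis=1)

    target = np.log2(n_neighbors)
    weights = np.zeros((n, n))
    for i in range(n):
        rho = knn_dist[i, 0]  # distance to the nearest neighbor
        shifted = np.maximum(knn_dist[i] - rho, 0.0)
        # Find a per-point scale sigma so the membership strengths sum to log2(k).
        lo, hi = 0.0, 1.0
        while np.exp(-shifted / hi).sum() < target:
            hi *= 2.0
        for _ in range(n_iter):
            mid = 0.5 * (lo + hi)
            if np.exp(-shifted / mid).sum() > target:
                hi = mid
            else:
                lo = mid
        weights[i, knn_idx[i]] = np.exp(-shifted / hi)

    # Fuzzy union of the directed graphs: a + b - a*b.
    return weights + weights.T - weights * weights.T

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 4))  # hypothetical data
G = fuzzy_knn_graph(X, n_neighbors=5)
print(G.shape)
```

The per-point scale adapts the notion of "close" to the local density of the data. The full algorithm then searches for low-dimensional coordinates whose own fuzzy graph matches this one as closely as possible.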

Recent Applications

In genomics, dimensionality reduction has enabled the analysis of single-cell sequencing data, where each cell is described by tens of thousands of genes and a data set contains thousands of cells or more. Methods such as variational autoencoders have contributed to the identification of cellular subtypes, the inference of developmental trajectories, and the understanding of biological heterogeneity.

In pattern recognition and computer vision, the representations generated through dimensionality reduction feed classification and clustering algorithms, allowing faster and more efficient processing of high-resolution images and videos.
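A minimal sketch of such a pipeline, assuming scikit-learn and its small bundled digits data set as a stand-in for real image data. The choice of 16 components and of logistic regression as the classifier is illustrative only.

```python
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# 8x8 digit images flattened to 64 pixel features.
X, y = load_digits(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Scale, reduce 64 features to 16 components, then classify.
model = make_pipeline(
    StandardScaler(),
    PCA(n_components=16),
    LogisticRegression(max_iter=2000),
)
model.fit(X_train, y_train)
accuracy = model.score(X_test, y_test)
print(accuracy)
```

Putting the reduction step inside the pipeline ensures that it is fitted on the training split only, so no information from the test set leaks into the learned projection.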

Comparison with Previous Works and Future Projection

Compared to older linear techniques such as PCA and Linear Discriminant Analysis (LDA), newer dimensionality reduction methods are better at preserving the nonlinear structure of data, which can translate into better performance in downstream machine learning tasks. These methods bring their own challenges, however, chief among them the interpretability of the results, which requires further research.

Future work on dimensionality reduction is likely to be multidisciplinary, integrating knowledge from topology, statistics, and deep learning. Techniques such as generative neural networks and contrastive encoders are expected to further improve the quality of reduced data representations and their applicability to complex high-dimensional data problems.

Case Studies

Bioinformatics case:

A recent study used UMAP to characterize the heterogeneity of melanoma cells from sequencing data. The method allowed the identification of cellular subpopulations, thus offering new avenues for targeted therapies based on the genomic profiles of melanoma cells.

Natural language processing (NLP) case:

In NLP, autoencoders have been used to compress textual representations and improve the efficiency of Transformer-based language models in text comprehension and machine translation tasks.

Conclusion

Dimensionality reduction occupies a central place in artificial intelligence, allowing us to navigate complex data landscapes and extract the essential structure of massive data sets for a variety of practical applications. As understanding of these techniques grows, so does the range of problems they can be applied to. Nevertheless, the explainability and robustness of these methods remain open challenges and an active area of research.