Foundations and Evolution of Maximum Likelihood Estimation in Machine Learning

Maximum Likelihood Estimation (MLE) is a cornerstone of parameter estimation in statistics and, by extension, in machine learning. The technique, introduced by Ronald A. Fisher in 1922, selects the parameter values of a statistical model that maximize the likelihood function, that is, the values under which the observed sample is most probable.

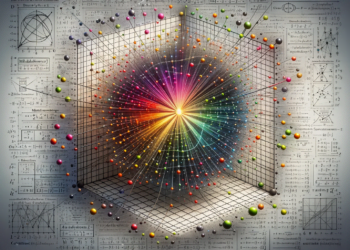

Mathematical Formulation and Optimization

The likelihood function, generally denoted L(θ|x), where θ is the parameter vector and x the observed data, is the probability of the data given the parameters, viewed as a function of θ. For continuous data, it is the probability density function evaluated at the observed data. MLE seeks the value of θ that maximizes L(θ|x). In practice one usually maximizes the log-likelihood instead, which has the same maximizer and turns products over independent observations into sums.
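As a minimal sketch of the definition above, assume the data are i.i.d. draws from a Gaussian, a model chosen here purely for illustration. The function names and the synthetic data are hypothetical; the closed-form estimates are the sample mean and the 1/n standard deviation.

```python
import math
import random

def gaussian_log_likelihood(xs, mu, sigma):
    """Log-likelihood of i.i.d. samples xs under N(mu, sigma^2)."""
    n = len(xs)
    squared_error = sum((x - mu) ** 2 for x in xs)
    return (-0.5 * n * math.log(2.0 * math.pi * sigma ** 2)
            - squared_error / (2.0 * sigma ** 2))

def gaussian_mle(xs):
    """Closed-form maximizers: sample mean and the 1/n standard deviation."""
    n = len(xs)
    mu_hat = sum(xs) / n
    sigma_hat = math.sqrt(sum((x - mu_hat) ** 2 for x in xs) / n)
    return mu_hat, sigma_hat

random.seed(0)
xs = [random.gauss(2.0, 1.5) for _ in range(1000)]  # synthetic data (assumption)
mu_hat, sigma_hat = gaussian_mle(xs)
best = gaussian_log_likelihood(xs, mu_hat, sigma_hat)
```

Evaluating `gaussian_log_likelihood` at any other pair (mu, sigma) should give a value no larger than `best`, which is what it means for the estimates to maximize the likelihood.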

When no closed-form maximizer exists, the likelihood is maximized numerically, for example with the Newton-Raphson method, or with the Expectation-Maximization (EM) algorithm for models with latent variables, where the likelihood cannot easily be maximized directly.
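The Newton-Raphson idea can be sketched on a deliberately simple case. The example below assumes an exponential model with rate parameter `rate`, whose MLE is known in closed form (1 / sample mean), so the iteration can be checked against it. The function name, starting value, and data are illustrative assumptions.

```python
def exponential_rate_mle(xs, rate=0.5, tol=1e-10, max_iter=50):
    """Newton-Raphson on l(rate) = n*log(rate) - rate*sum(xs)."""
    n, total = len(xs), sum(xs)
    for _ in range(max_iter):
        score = n / rate - total      # first derivative of the log-likelihood
        hessian = -n / rate ** 2      # second derivative (always negative)
        step = score / hessian
        rate -= step                  # Newton update: rate - score / hessian
        if abs(step) < tol:
            break
    return rate

xs = [0.5, 1.2, 0.3, 2.1, 0.9]        # toy data with sample mean 1.0
rate_hat = exponential_rate_mle(xs)
closed_form = len(xs) / sum(xs)
```

For this particular model the iteration only converges when the starting value lies between 0 and 2 / mean(xs), which illustrates why Newton-type methods are usually paired with a sensible initialization or a step-size safeguard.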

Computational Challenges and Modern Solutions

One of the primary challenges of MLE is its computational cost, especially with high-dimensional data and models. Gradients are expensive to compute over large datasets, and Hessians grow quadratically with the number of parameters, so both require efficient handling. Modern techniques rely on stochastic gradient approximations and adaptive optimizers such as RMSprop and Adam: RMSprop scales each step by a running estimate of the second moment of the gradient, and Adam additionally tracks the first moment.
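To make the stochastic, adaptive approach concrete, here is a minimal sketch of the Adam update applied to a one-parameter problem: estimating the mean of a unit-variance Gaussian from minibatches. The model, hyperparameter values, and function name are assumptions for illustration, not a recommended configuration.

```python
import math
import random

def adam_fit_mean(xs, lr=0.05, beta1=0.9, beta2=0.999, eps=1e-8,
                  steps=2000, batch_size=32, seed=0):
    """Estimate the mean of N(mu, 1) by minimizing minibatch NLL with Adam."""
    rng = random.Random(seed)
    mu, m, v = 0.0, 0.0, 0.0
    for t in range(1, steps + 1):
        batch = rng.sample(xs, batch_size)
        # Gradient of the average negative log-likelihood with respect to mu.
        grad = mu - sum(batch) / batch_size
        m = beta1 * m + (1.0 - beta1) * grad         # first-moment estimate
        v = beta2 * v + (1.0 - beta2) * grad ** 2    # second-moment estimate
        m_hat = m / (1.0 - beta1 ** t)               # bias correction
        v_hat = v / (1.0 - beta2 ** t)
        mu -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return mu

random.seed(1)
data = [random.gauss(3.0, 1.0) for _ in range(500)]  # synthetic data (assumption)
mu_adam = adam_fit_mean(data)
sample_mean = sum(data) / len(data)
```

Because each step uses a noisy minibatch gradient, `mu_adam` should land near `sample_mean` (the exact MLE for this model) rather than match it exactly.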

Regularization has also become important for preventing overfitting in parameter estimation: a penalty term on the parameters is subtracted from the log-likelihood (equivalently, added to the negative log-likelihood), balancing model complexity against fit to the data.
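A small worked case shows the effect of the penalty. Assume a unit-variance Gaussian likelihood for the mean and an L2 penalty with weight `penalty`; both choices, and the function name, are illustrative. Setting the derivative of the penalized log-likelihood to zero gives the closed form used below.

```python
def penalized_mean(xs, penalty=0.0):
    """Maximizer of -0.5*sum((x - mu)^2) - 0.5*penalty*mu^2 over mu."""
    return sum(xs) / (len(xs) + penalty)

xs = [2.3, 1.9, 2.8, 2.1]
mle = penalized_mean(xs)                   # penalty = 0 recovers the plain MLE
shrunk = penalized_mean(xs, penalty=4.0)   # penalty = n pulls it halfway to zero
```

The penalty acts like extra pseudo-observations at zero: the larger it is relative to the sample size, the more the estimate shrinks.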

Extensions and Current Applications

Deep Neural Networks (DNNs), which stack multiple layers of nonlinear transformations, have outperformed other models on a wide variety of complex tasks, from speech recognition to medical image interpretation. Despite their intricate architecture, MLE remains central to training them: in classification, minimizing the cross-entropy loss is equivalent to maximizing the log-likelihood of the observed labels under the predicted class probabilities.
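The link between cross-entropy and likelihood can be checked numerically. The sketch below assumes a softmax output layer and one-hot labels; the logits are made-up values standing in for network outputs, and the function names are hypothetical.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(logits_batch, labels):
    """Mean cross-entropy between one-hot labels and softmax outputs."""
    losses = []
    for logits, label in zip(logits_batch, labels):
        probs = softmax(logits)
        one_hot = [1.0 if k == label else 0.0 for k in range(len(probs))]
        losses.append(-sum(t * math.log(p) for t, p in zip(one_hot, probs)))
    return sum(losses) / len(losses)

def negative_log_likelihood(logits_batch, labels):
    """Mean negative log-probability assigned to the observed labels."""
    return -sum(math.log(softmax(z)[y])
                for z, y in zip(logits_batch, labels)) / len(labels)

logits_batch = [[2.0, 0.5, -1.0], [0.1, 0.2, 3.0]]  # stand-in network outputs
labels = [0, 2]
ce = cross_entropy(logits_batch, labels)
nll = negative_log_likelihood(logits_batch, labels)
```

With one-hot targets, every term of the cross-entropy sum vanishes except the one for the true class, so `ce` and `nll` agree up to floating-point error.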

A significant extension of MLE in machine learning is its Bayesian counterpart, Maximum A Posteriori (MAP) estimation, which incorporates prior knowledge through a prior distribution over the parameters and maximizes the posterior, combining the data likelihood with prior expectations.
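A coin-flip example illustrates the difference. Assume a Bernoulli likelihood with a Beta(alpha, beta) prior, a standard conjugate pairing picked here for convenience; the function names and prior values are illustrative. The MAP estimate is the mode of the resulting Beta posterior.

```python
def bernoulli_mle(successes, trials):
    """Fraction of successes: the maximum likelihood estimate."""
    return successes / trials

def bernoulli_map(successes, trials, alpha=2.0, beta=2.0):
    """Posterior mode under a Beta(alpha, beta) prior (needs alpha, beta > 1)."""
    return (successes + alpha - 1.0) / (trials + alpha + beta - 2.0)

mle = bernoulli_mle(3, 3)            # 1.0: three heads in three flips
map_estimate = bernoulli_map(3, 3)   # 0.8: the prior tempers the estimate
```

With a flat prior (alpha = beta = 1) the MAP estimate coincides with the MLE, and as the number of trials grows the influence of the prior fades.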

Case Studies: Innovation in MLE

A notable case study in combining likelihood-based thinking with contemporary methods comes from generative deep learning, specifically Generative Adversarial Networks (GANs). GANs do not maximize an explicit likelihood. Instead, training is framed as a game in which one network, the generator, learns to produce synthetic data while another network, the discriminator, estimates the probability that a sample is real rather than generated.

Another case study involves Gaussian Processes (GPs), which define distributions over functions. Here MLE is applied to the kernel hyperparameters by maximizing the marginal likelihood of the data. GPs have been used effectively for modeling uncertainty and for non-parametric Bayesian inference.
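In GP regression the quantity usually maximized over hyperparameters is the log marginal likelihood. The sketch below assumes an RBF kernel with a fixed noise variance and selects the length scale by grid search; the kernel choice, grid, data, and helper names are all illustrative assumptions, and practical implementations typically use gradient-based optimizers and a linear algebra library instead.

```python
import math

def rbf_kernel(xs, length_scale, noise=1e-2):
    """RBF Gram matrix with a noise variance added on the diagonal."""
    n = len(xs)
    return [[math.exp(-0.5 * ((xs[i] - xs[j]) / length_scale) ** 2)
             + (noise if i == j else 0.0)
             for j in range(n)] for i in range(n)]

def cholesky(a):
    """Lower-triangular L with L L^T = a, for symmetric positive definite a."""
    n = len(a)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                low[i][j] = math.sqrt(a[i][i] - s)
            else:
                low[i][j] = (a[i][j] - s) / low[j][j]
    return low

def log_marginal_likelihood(xs, ys, length_scale, noise=1e-2):
    """log p(y | x) = -0.5 y^T K^-1 y - 0.5 log|K| - (n/2) log(2 pi)."""
    n = len(xs)
    low = cholesky(rbf_kernel(xs, length_scale, noise))
    # Forward substitution: solve low @ z = ys, so that z.z = y^T K^-1 y.
    z = []
    for i in range(n):
        s = sum(low[i][k] * z[k] for k in range(i))
        z.append((ys[i] - s) / low[i][i])
    quad = sum(value * value for value in z)
    log_det = 2.0 * sum(math.log(low[i][i]) for i in range(n))
    return -0.5 * quad - 0.5 * log_det - 0.5 * n * math.log(2.0 * math.pi)

xs = [0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0]
ys = [math.sin(x) for x in xs]       # toy targets (assumption)
grid = [0.1, 0.3, 1.0, 3.0]          # candidate length scales (assumption)
scores = {ls: log_marginal_likelihood(xs, ys, ls) for ls in grid}
best_length_scale = max(scores, key=scores.get)
```

The log-determinant term penalizes overly flexible kernels while the quadratic term rewards fit, so the marginal likelihood trades the two off without a separate validation set.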

Future and Emerging Directions

Looking ahead, the combination of MLE with reinforcement learning methods and multi-agent systems presents interesting possibilities. Recent research explores how agents can learn to act in complex environments by maximizing a reward signal, an objective that plays a role loosely analogous to the likelihood in dynamic settings.

Population-based optimization techniques, such as evolutionary algorithms, offer a variation on the idea: a population of candidate solutions competes and adapts, guided by a fitness score that measures suitability to the problem and plays a role loosely analogous to the likelihood.

Conclusion

In summary, the utility of maximum likelihood estimation in machine learning extends well beyond its statistical origins, providing a robust framework for training and inference across a wide range of models. Continued adaptation and the integration of new optimization methods keep it applicable in a rapidly evolving field. By combining statistical principles with modern computational strategies, MLE remains an indispensable part of the data scientist’s and AI researcher’s toolbox, balancing rigorous theory with practical applicability.