In modern machine learning (ML), one of the most significant developments has been representation learning: a family of techniques for learning useful representations of data. These techniques transform raw data into forms that make it easier for algorithms to detect patterns and make decisions. The field has evolved from early manual feature extraction to recent advances in deep learning, and it applies to both structured and unstructured data, from images and audio to text and genetic data.

The theoretical foundation of representation learning rests on the idea that observed data are manifestations of underlying latent factors of variation, which a model attempts to capture and interpret. The quality of a representation is measured by how easily a downstream ML task, such as classification, regression, or clustering, can be performed once the raw data have been transformed into that representation.
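To make the idea of representation quality concrete, the sketch below compares a deliberately simple downstream classifier on two views of the same synthetic data. Everything in it is an illustrative assumption: the two-ring dataset, the hand-written radial feature (a stand-in for a learned representation), and the helper `nearest_centroid_accuracy`.

```python
import math
import random


def nearest_centroid_accuracy(features, labels):
    """Fit one centroid per class, then report accuracy on the same points."""
    classes = sorted(set(labels))
    dim = len(features[0])
    centroids = {}
    for c in classes:
        members = [f for f, y in zip(features, labels) if y == c]
        centroids[c] = [sum(m[d] for m in members) / len(members) for d in range(dim)]
    correct = 0
    for f, y in zip(features, labels):
        predicted = min(classes, key=lambda c: math.dist(f, centroids[c]))
        correct += predicted == y
    return correct / len(labels)


# Synthetic data (assumption): class 0 lies on a ring of radius 1, class 1 on radius 3.
rng = random.Random(0)
raw, labels = [], []
for i in range(200):
    label = i % 2
    radius = 1.0 if label == 0 else 3.0
    angle = rng.uniform(0.0, 2.0 * math.pi)
    raw.append((radius * math.cos(angle), radius * math.sin(angle)))
    labels.append(label)

# Hand-written "representation": distance from the origin (the latent factor here).
radial = [(math.hypot(x, y),) for x, y in raw]

raw_accuracy = nearest_centroid_accuracy(raw, labels)
radial_accuracy = nearest_centroid_accuracy(radial, labels)
print(f"raw coordinates: {raw_accuracy:.2f}, radial feature: {radial_accuracy:.2f}")
```

In raw coordinates both class centroids fall near the origin, so the classifier does little better than chance, whereas the one-dimensional radial representation separates the classes cleanly. The data are identical in both cases; only the representation changes.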

Deep Neural Networks: Deep Neural Networks (DNNs) have been a cornerstone of representation learning, learning hierarchies of features with great success. Architectures such as Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) have reached milestones in visual recognition and natural language processing (NLP), respectively. The incorporation of gated units such as Long Short-Term Memory (LSTM) units and Gated Recurrent Units (GRUs) has made it possible to capture long-range temporal dependencies in data sequences.

Transformers: The emergence of transformers, introduced in the seminal paper “Attention Is All You Need” by Vaswani et al. (2017), marked the beginning of an era in which attention became the central mechanism for capturing global relationships in data. The architecture has proven extraordinarily effective, especially in NLP, where models such as BERT (Bidirectional Encoder Representations from Transformers), GPT (Generative Pre-trained Transformer), and T5 (Text-to-Text Transfer Transformer) have transformed the approach to language understanding and text generation.
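The attention mechanism at the core of these models can be sketched in a few lines. The example below is a minimal, single-head version of scaled dot-product attention in plain Python. It assumes the queries, keys, and values are the token vectors themselves, whereas a real transformer first applies learned projections; the toy `tokens` are also an assumption.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Return (outputs, weights) for lists of query, key, and value vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(key dimension).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of all value vectors.
        output = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(output)
        all_weights.append(weights)
    return outputs, all_weights


# Toy self-attention: three 2-dimensional token vectors attend to one another.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = scaled_dot_product_attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Every token receives a weight for every other token, which is what lets attention capture global relationships in a single step rather than passing information along a sequence.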

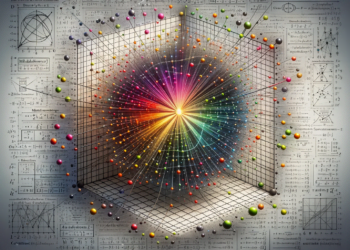

Contrastive Learning: Contrastive learning has recently gained prominence as an approach to unsupervised (more precisely, self-supervised) learning. Representations are learned by pulling positive pairs, for example two augmented views of the same image, close together in the representation space while pushing negative examples apart. This method has achieved remarkable advances in tasks where labels are scarce or non-existent, notably in visual representation learning.
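One common way to express this objective is an InfoNCE-style loss, sketched below for a single anchor. This is an illustration under stated assumptions, not the formulation of any particular paper: the function name `info_nce_loss`, the temperature of 0.1, and the toy 2-dimensional embeddings are all chosen for the example.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def info_nce_loss(anchor, positive, negatives, temperature=0.1):
    """Cross-entropy of picking the positive among the positive and all negatives."""
    logits = [cosine_similarity(anchor, positive) / temperature]
    logits += [cosine_similarity(anchor, n) / temperature for n in negatives]
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(l - peak) for l in logits))
    return log_sum_exp - logits[0]


anchor = [1.0, 0.0]

# Positive close to the anchor, negatives far away: low loss.
aligned_loss = info_nce_loss(anchor, [0.9, 0.1], [[0.0, 1.0], [-1.0, 0.0]])

# Positive far from the anchor, one negative close to it: high loss.
misaligned_loss = info_nce_loss(anchor, [0.0, 1.0], [[0.9, 0.1], [-1.0, 0.0]])

print(f"aligned: {aligned_loss:.4f}, misaligned: {misaligned_loss:.4f}")
```

Minimizing this loss over many anchors drives positives together and negatives apart, which is the behavior described above. No labels are involved; the positive pairs come from the data itself.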

Neuro-Symbolism: Neuro-symbolism is an emerging perspective that combines the generalization and efficiency of deep learning with the interpretability and structure of symbolic processing. It seeks to overcome limitations of DNNs, such as the lack of causal understanding and the difficulty of incorporating prior knowledge. Proposals such as symbolic neural networks and reasoning modules integrated into the network architecture aim to deliver more robust and generalizable learning.

Transfer and Multi-Task Learning: Transfer learning and multi-task learning improve the efficiency of representation learning by leveraging knowledge from related tasks. This is evident in systems where models pre-trained on large datasets are fine-tuned for specific tasks, which improves generalization and reduces computational cost.

Fine-Tuning and Representation Adjustment: Fine-tuning adapts a pre-trained model to a specific task and is fundamental in practical applications. A notable example is the fine-tuning of transformer models for specialized NLP domains, such as law or medicine, which improves the model’s ability to capture the jargon and nuances of each field.
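The sketch below illustrates the simplest variant of this idea: the pre-trained encoder is frozen and only a small task-specific head is trained on its outputs. Full fine-tuning would also update the encoder weights. The names and numbers are hypothetical: `PRETRAINED_WEIGHTS` stands in for weights learned elsewhere, and the four-example dataset is invented for illustration.

```python
import math

# Hypothetical stand-in for weights learned during pre-training on a large dataset.
PRETRAINED_WEIGHTS = [[1.0, -1.0], [0.5, 0.5]]


def encode(x):
    """Frozen encoder: a fixed linear map followed by tanh."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in PRETRAINED_WEIGHTS]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(head, bias, feature):
    return sigmoid(sum(h * fi for h, fi in zip(head, feature)) + bias)


def mean_log_loss(head, bias, features, labels):
    total = 0.0
    for f, y in zip(features, labels):
        p = predict(head, bias, f)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(labels)


def train_head(features, labels, steps=200, learning_rate=0.5):
    """Gradient descent on a logistic-regression head; the encoder is never touched."""
    head, bias = [0.0] * len(features[0]), 0.0
    for _ in range(steps):
        grad_head, grad_bias = [0.0] * len(head), 0.0
        for f, y in zip(features, labels):
            error = predict(head, bias, f) - y
            grad_head = [g + error * fi for g, fi in zip(grad_head, f)]
            grad_bias += error
        head = [h - learning_rate * g / len(labels) for h, g in zip(head, grad_head)]
        bias -= learning_rate * grad_bias / len(labels)
    return head, bias


# Small task-specific dataset (assumption).
inputs = [[2.0, 0.0], [1.5, 0.5], [0.0, 2.0], [0.5, 1.5]]
labels = [1, 1, 0, 0]
features = [encode(x) for x in inputs]  # computed once with the frozen encoder

initial_loss = mean_log_loss([0.0, 0.0], 0.0, features, labels)
head, bias = train_head(features, labels)
final_loss = mean_log_loss(head, bias, features, labels)
predictions = [int(predict(head, bias, f) > 0.5) for f in features]
print(f"loss before: {initial_loss:.3f}, after: {final_loss:.3f}")
```

Because the encoder already produces features that separate the classes, a handful of examples and a few trainable parameters are enough. This is the computational saving that motivates reusing pre-trained representations.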

Generalization and Robustness: A current focus of representation research is generalization and robustness to adversarial examples. Regularization mechanisms such as dropout and batch normalization (whose primary role is to stabilize training, with regularization as a side effect) are being investigated, along with training methods designed specifically to make the learned representations more robust.
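As an illustration of one of the regularizers mentioned here, the following is a minimal sketch of inverted dropout in plain Python. The function signature and the all-ones activations are assumptions for the example; deep learning frameworks provide their own implementations.

```python
import random


def dropout(activations, drop_probability, rng, training=True):
    """Inverted dropout: zero units at random and rescale the survivors."""
    if not 0.0 <= drop_probability < 1.0:
        raise ValueError("drop_probability must be in [0, 1)")
    if not training or drop_probability == 0.0:
        # At inference time the activations pass through unchanged.
        return list(activations)
    keep_probability = 1.0 - drop_probability
    return [
        a / keep_probability if rng.random() < keep_probability else 0.0
        for a in activations
    ]


rng = random.Random(0)
activations = [1.0] * 10000

dropped = dropout(activations, 0.5, rng)
dropped_mean = sum(dropped) / len(dropped)
inference_output = dropout(activations, 0.5, rng, training=False)

print(f"mean activation with dropout: {dropped_mean:.3f}")
```

Rescaling the surviving units by `1 / keep_probability` keeps the expected activation the same during training and inference, so no adjustment is needed when dropout is switched off.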

Ethics and Bias: As these technologies grow more capable, ethical concerns about bias and fairness arise. Methods are being developed to detect and mitigate biases in learned representations, with the goal of ensuring a positive social impact.

The future of representation learning appears to lie in tighter integration between models trained on massive data and techniques that incorporate domain knowledge and causal understanding. Combining large volumes of data and highly expressive models, such as the latest generations of transformers, with techniques that explain and reason about the learned representations is at the forefront of current research and promises developments that could further narrow the gap between artificial and human intelligence.